Disclaimer: This post has been translated into English using a machine translation model. Please let me know if you find any mistakes.

Introduction

Whisper is an automatic speech recognition (ASR) system trained on 680,000 hours of multilingual and multitask supervised data collected from the web. Training on such a large and diverse dataset makes it more robust to accents, background noise, and technical language. It can also transcribe speech in many languages and translate that speech into English.

Installation

To install this tool, it's best to create a new Anaconda environment.

InputPython!conda create -n whisperCopied

We enter the environment

InputPython!conda activate whisperCopied

We install all the necessary packages

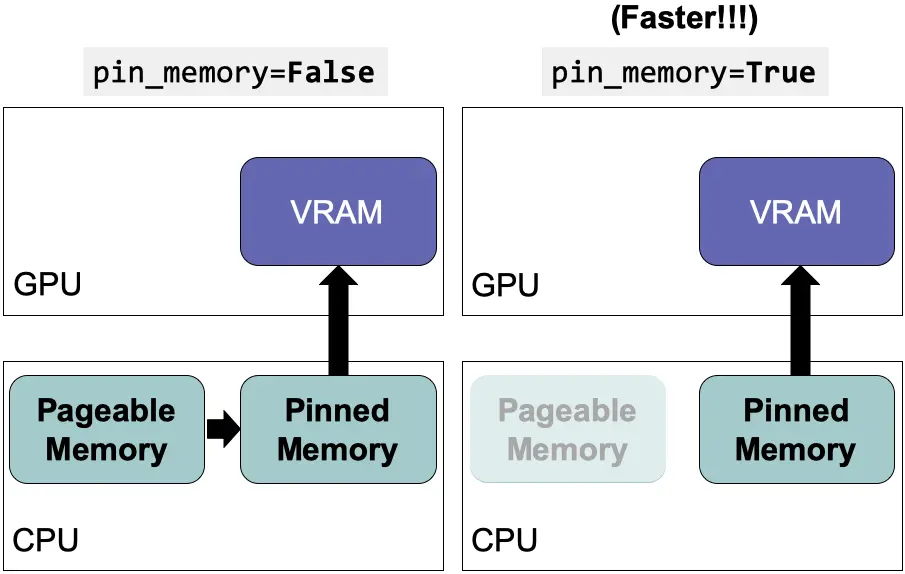

InputPython!conda install pytorch torchvision torchaudio pytorch-cuda=11.6 -c pytorch -c nvidiaCopied

Finally, we install whisper

InputPython!pip install git+https://github.com/openai/whisper.gitCopied

And we update ffmpeg

InputPython!sudo apt update && sudo apt install ffmpegCopied
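Before moving on, a quick sanity check can confirm that the pieces are in place. The sketch below is a hypothetical helper (`check_environment` is not part of Whisper) that uses only the standard library to see whether the `ffmpeg` binary is on the `PATH` and whether the `torch` and `whisper` packages are importable. It imports PyTorch to ask about CUDA only when PyTorch is actually installed.

```python
import importlib.util
import shutil


def check_environment() -> dict:
    """Hypothetical helper: report which Whisper prerequisites are available."""
    status = {
        # Whisper calls the ffmpeg binary to decode audio files
        "ffmpeg": shutil.which("ffmpeg") is not None,
        # find_spec returns None when a package cannot be imported
        "torch": importlib.util.find_spec("torch") is not None,
        "whisper": importlib.util.find_spec("whisper") is not None,
        "cuda": False,
    }
    if status["torch"]:
        import torch  # imported lazily so the check also works without PyTorch

        status["cuda"] = bool(torch.cuda.is_available())
    return status


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name:8s} {'yes' if ok else 'no'}")
```

If `ffmpeg` reports `no`, `whisper.load_audio` will fail even when the Python packages are installed.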

Usage

We import Whisper

```python
import whisper
```

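We select the model. Larger models are generally more accurate, but they are slower and need more memory.

```python
# Available sizes, from smallest (fastest) to largest (most accurate):
# model_name = "tiny"
# model_name = "base"
# model_name = "small"
# model_name = "medium"
model_name = "large"
# The weights are downloaded the first time a size is loaded
model = whisper.load_model(model_name)
```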
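The Whisper README lists the approximate VRAM each size needs: about 1 GB for `tiny` and `base`, 2 GB for `small`, 5 GB for `medium` and 10 GB for `large`. A small hypothetical helper (`pick_model` is not part of Whisper) can use those rough figures to choose the largest model that fits a memory budget. `whisper.available_models()` returns the full list of names supported by your installed version.

```python
# Approximate VRAM requirements in GB, as listed in the Whisper README.
# These are rough figures, not guarantees.
APPROX_VRAM_GB = {
    "tiny": 1,
    "base": 1,
    "small": 2,
    "medium": 5,
    "large": 10,
}


def pick_model(vram_gb: float) -> str:
    """Hypothetical helper: return the largest size whose approximate VRAM fits."""
    fitting = [name for name, need in APPROX_VRAM_GB.items() if need <= vram_gb]
    if not fitting:
        raise ValueError(f"No Whisper model is expected to fit in {vram_gb} GB")
    # Dicts keep insertion order (smallest to largest), so the last entry wins
    return fitting[-1]


print(pick_model(8))  # medium
```

For example, `whisper.load_model(pick_model(8))` would load `medium` on a GPU with 8 GB of memory.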

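We load the audio of an old Micro Machines commercial from 1987

```python
audio_path = "MicroMachines.mp3"
# Decode with ffmpeg and resample to 16 kHz mono float32
audio = whisper.load_audio(audio_path)
# Pad with zeros or trim to exactly 30 seconds
audio = whisper.pad_or_trim(audio)
```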
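Whisper's encoder always sees 30 seconds of audio sampled at 16 kHz, that is 16,000 × 30 = 480,000 samples. The NumPy sketch below reimplements the idea behind `pad_or_trim` for illustration only (`pad_or_trim_sketch` is hypothetical; the real function also accepts PyTorch tensors): longer clips are cut, shorter ones are zero-padded at the end. Anything after the first 30 seconds is therefore ignored by `decode`; for longer recordings, `model.transcribe` processes the audio in successive 30-second windows.

```python
import numpy as np

SAMPLE_RATE = 16_000  # Hz, the rate whisper.load_audio resamples to
CHUNK_SECONDS = 30
N_SAMPLES = SAMPLE_RATE * CHUNK_SECONDS  # 480,000 samples


def pad_or_trim_sketch(audio: np.ndarray, length: int = N_SAMPLES) -> np.ndarray:
    """Hypothetical re-implementation: force the last axis to `length` samples."""
    n = audio.shape[-1]
    if n > length:
        # Keep only the first `length` samples
        return audio[..., :length]
    if n < length:
        # Zero-pad at the end of the last axis only
        pad_width = [(0, 0)] * (audio.ndim - 1) + [(0, length - n)]
        return np.pad(audio, pad_width)
    return audio


short_clip = np.ones(10 * SAMPLE_RATE, dtype=np.float32)  # 10 s of audio
long_clip = np.ones(45 * SAMPLE_RATE, dtype=np.float32)   # 45 s of audio
print(pad_or_trim_sketch(short_clip).shape)  # (480000,)
print(pad_or_trim_sketch(long_clip).shape)   # (480000,)
```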

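We compute the log-Mel spectrogram and move it to the same device as the model. Recent versions of Whisper need the number of Mel bins taken from the model, because `large-v3` (which `"large"` loads in recent versions) uses 128 bins instead of 80

```python
mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels).to(model.device)
```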
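The spectrogram is computed with a 400-sample (25 ms) window and has one column every 160 samples (10 ms at 16 kHz), so a 30-second window becomes 480,000 / 160 = 3,000 frames. The arithmetic below uses the constant values from Whisper's `whisper/audio.py`; `expected_mel_shape` is only an illustrative helper, and the Mel-bin count you should pass is the one stored in `model.dims.n_mels`.

```python
SAMPLE_RATE = 16_000  # Hz
N_FFT = 400           # STFT window length in samples
HOP_LENGTH = 160      # step between frames in samples
CHUNK_SECONDS = 30


def expected_mel_shape(n_mels: int = 80, seconds: int = CHUNK_SECONDS) -> tuple:
    """Illustrative helper: (Mel bins, frames) for `seconds` of 16 kHz audio."""
    n_samples = SAMPLE_RATE * seconds
    n_frames = n_samples // HOP_LENGTH
    return (n_mels, n_frames)


print(expected_mel_shape())            # (80, 3000)
print(expected_mel_shape(n_mels=128))  # (128, 3000)
print(1000 * N_FFT / SAMPLE_RATE, 1000 * HOP_LENGTH / SAMPLE_RATE)  # 25.0 10.0
# The encoder's convolutional front end has stride 2, leaving 3000 // 2 = 1500
# positions, which matches model.dims.n_audio_ctx
print(expected_mel_shape()[1] // 2)    # 1500
```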

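We detect the spoken language

```python
# For a single spectrogram, probs maps each language code to its probability
_, probs = model.detect_language(mel)
print(f"Detected language: {max(probs, key=probs.get)}")
```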

Detected language: en
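`probs` is a plain dictionary from language codes to probabilities, so `max(probs, key=probs.get)` simply returns the key with the highest value. To see how confident the detection is, you can list the top candidates instead. The sketch below uses a made-up `probs` dictionary (the numbers are invented for illustration, not real model output) so that it runs without loading a model; with Whisper you would pass the `probs` returned by `model.detect_language`. `top_languages` is a hypothetical helper.

```python
import heapq


def top_languages(probs: dict, k: int = 3) -> list:
    """Hypothetical helper: the k (language, probability) pairs with highest probability."""
    return heapq.nlargest(k, probs.items(), key=lambda item: item[1])


# Invented values for illustration only; real output has one entry per supported language
probs = {"en": 0.91, "es": 0.04, "de": 0.03, "fr": 0.015, "it": 0.005}

best = max(probs, key=probs.get)  # same idiom as in the detection cell
print(best)  # en
for lang, p in top_languages(probs):
    print(f"{lang}: {p:.2%}")
```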

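We decode the audio with the default options

```python
# Defaults: task="transcribe", language detected automatically.
# On a CPU-only machine, use whisper.DecodingOptions(fp16=False),
# since half precision is intended for GPUs.
options = whisper.DecodingOptions()
result = whisper.decode(model, mel, options)
```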

And we display the transcribed text

```python
result.text
```

"This is the Micro Machine Man presenting the most midget miniature motorcade of micro machines. Each one has dramatic details, terrific trim, precision paint jobs, plus incredible micro machine pocket play sets. There's a police station, fire station, restaurant, service station, and more. Perfect pocket portables to take any place. And there are many miniature play sets to play with and each one comes with its own special edition micro machine vehicle and fun fantastic features that miraculously move. Raise the boat lift at the airport, marina, man the gun turret at the army base, clean your car at the car wash, raise the toll bridge. And these play sets fit together to form a micro machine world. Micro machine pocket play sets so tremendously tiny, so perfectly precise, so dazzlingly detailed, you'll want to pocket them all. Micro machines and micro machine pocket play sets sold separately from Galoob. The smaller they are, the better they are."
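The transcript above was produced with the default `DecodingOptions()`, where the temperature is 0 and no beam size is set, so Whisper decodes greedily: at each step it appends the single most likely next token, and it stops at the end-of-text token or at the length limit. The toy sketch below shows only that loop; the vocabulary, the `toy_scores` function and the `EOT` string are invented for illustration and have nothing to do with Whisper's real tokenizer or model (the real decoder also starts from special tokens that set the language and task, and filters the scores before choosing).

```python
from typing import Callable, Dict, List

EOT = "<|endoftext|>"  # hypothetical end-of-text token for this toy example


def greedy_decode(
    next_token_scores: Callable[[List[str]], Dict[str, float]],
    max_tokens: int = 20,
) -> List[str]:
    """Append the highest-scoring token until EOT or max_tokens is reached."""
    tokens: List[str] = []
    for _ in range(max_tokens):
        scores = next_token_scores(tokens)
        best = max(scores, key=scores.get)  # argmax, i.e. temperature 0
        if best == EOT:
            break
        tokens.append(best)
    return tokens


# Toy "model": a fixed table of scores keyed by the previous token
TOY_TABLE = {
    None: {"micro": 2.0, "the": 1.0, EOT: -1.0},
    "micro": {"machines": 3.0, "man": 2.5, EOT: 0.0},
    "machines": {EOT: 1.5, "micro": 1.0},
}


def toy_scores(tokens: List[str]) -> Dict[str, float]:
    previous = tokens[-1] if tokens else None
    return TOY_TABLE[previous]


print(greedy_decode(toy_scores))  # ['micro', 'machines']
```

Setting a `beam_size` or a non-zero `temperature` in `DecodingOptions` replaces this greedy choice with beam search or sampling.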